If there were a single concept from economics I’d like to see more widely understood, it would be adverse selection: the tendency for markets to sort participants into worse and worse pools when one side of a transaction knows more than the other.

The idea originates in a 1970 paper by George Akerlof, “The Market for Lemons.” Akerlof modeled used car sales in a way that revealed how, under very common conditions, no one with a good used car would be willing to sell it. Sellers know more about their cars than buyers do, and so those with “lemons” will always be more desperate to sell than those with reliable vehicles. Since buyers can’t tell the difference, they will only purchase a used car on the assumption that something is wrong with it, and pay accordingly. As Akerlof puts it, “good cars may not be traded at all. The ‘bad’ cars tend to drive out the good” (489).
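
The unraveling logic is easier to see in miniature. What follows is a toy sketch, not Akerlof’s own formalization: the quality values, the assumption that buyers value any car at 1.5 times what its seller does, and the function name `unravel` are all illustrative choices. Sellers know their car’s quality and sell only if the price covers it; buyers see only the average quality of what is on offer.

```python
def unravel(qualities, buyer_premium=1.5, max_rounds=100):
    """Track buyers' offers in a stylized lemons market.

    Each seller values her car at its quality q and sells only if price >= q.
    Buyers cannot observe q, so they offer buyer_premium times the average
    quality of the cars still on the market at the current price.
    """
    price = buyer_premium * max(qualities)  # optimistic opening offer
    history = [price]
    for _ in range(max_rounds):
        offered = [q for q in qualities if q <= price]
        if not offered:
            break
        new_price = buyer_premium * sum(offered) / len(offered)
        if new_price == price:  # offers have stopped moving
            break
        price = new_price
        history.append(price)
    return history


# Hypothetical market: 100 cars worth $100, $200, ..., $10,000 to their sellers.
qualities = [100 * i for i in range(1, 101)]
history = unravel(qualities)
print([round(p) for p in history])
print("cars still traded:", sum(q <= history[-1] for q in qualities))
```

Every round, the owners of the best remaining cars withdraw, the average quality on offer falls, and buyers lower their offer in response. Under these assumptions the price settles at a level where only a handful of the worst cars change hands, even though every single trade would have benefited both parties.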

This is the core pattern: asymmetric information (one side knows more than the other) leads to adverse selection (the worse risks or lower-quality goods dominate the market) which can produce market unraveling (the market ceases to function well for anyone). That three-step sequence recurs across domains far removed from used cars. In each case, the same basic dynamic creates sorting problems that individual good faith cannot solve.

It is worth noting at the outset that markets sometimes generate their own responses to adverse selection. The used car market did not actually collapse: it produced warranties, certified pre-owned programs, CarFax, and lemon laws. A free-market economist would say that Akerlof identified a problem and then markets solved it through entrepreneurial innovation. This is a fair point, and it should be conceded. But the private-solution story works best in domains where the stakes are modest and the information gap is narrow enough for reputation mechanisms to bridge. Nobody invented a CarFax for health risk that prevented the insurance death spiral. Nobody developed a private warranty against neighborhood racial transition. The domains where adverse selection is most destructive tend to be precisely the ones where private solutions are weakest: the information gaps are widest, the stakes are highest, and the affected populations have the least market power to demand better terms.

Poverty and Insurance Markets

Akerlof applies the same model to car, life, and medical insurance: the more accident-prone, close to death, or sickly you are, the more desperate you will be to have insurance. But the more these “high-risk” individuals buy insurance, the higher the payouts, which drives up the average price above what healthier, younger, better drivers would choose to pay. Adverse selection takes over, and only the high-risk individuals end up insured.

The textbook version of this story is clean, but reality is messier in an interesting way. In practice, the people most likely to buy insurance are not just the sick; they’re also the risk-averse. Healthy people who worry about everything overinsure. They buy supplemental plans, max out their coverage, and renew faithfully. This actually helps stabilize insurance pools, because the worried-well subsidize the genuinely ill. The people who go without insurance tend not to be the healthy-and-rational actors of the economic model; they’re the young and cavalier, the overwhelmed, or the too-poor-to-bother. Adverse selection is real, but it operates alongside a countervailing force: anxiety. The question for policy design is which force dominates.

The underlying problem remains asymmetric information. Insurance purchasers know more about their own risks than the insurance company does. This is why life insurers try to get as much medical and genetic information about their enrollees as possible, and why car insurance companies use accident history, residential zip code, and miles traveled to price coverage.

It is also why the Affordable Care Act was designed to require “community rating”: forcing insurers to ignore most individualized risk information and instead treat communities as a single risk pool. Several distinct mechanisms work together here. Community rating prevents price discrimination against the sick. Subsidies stabilize the pool by making coverage affordable. The individual mandate discourages people from waiting until they’re desperately ill to enroll. (Insurers used to guard against that waiting game with “pre-existing conditions” exclusions; the ACA banned those exclusions and relied on the mandate and its financial penalty instead.)

This is all well-known to anyone who has paid attention to health care policy over the last decade. But naming and framing the problem this way reveals why some solutions are superior to others, why even well-intentioned proposals can recreate the very dynamics they’re designed to prevent, and why dismantling these protections is so dangerous.

We are watching this play out in real time. The 2017 Republican tax bill zeroed out the individual mandate penalty, removing the main tool that kept healthy people in the insurance pool. During his first term, Trump slashed ACA outreach and advertising by 90% and cut the enrollment period roughly in half. This is a textbook recipe for adverse selection: the sick always know where to sign up, while healthy people, the ones whose premiums subsidize the pool, are the most likely to miss a shortened window or never hear about their options. By one estimate, these changes alone kept 500,000 people from enrolling.

The second term has been worse. Congressional Republicans allowed the enhanced premium subsidies to expire at the end of 2025, and the so-called “big, beautiful bill” cut over a trillion dollars in federal health care spending. New CMS rules on enrollment verification significantly disrupted automatic reenrollment, which had kept nearly 11 million people covered, though the full regulatory picture remains in flux amid litigation and rule revisions. The results are already visible: insurers’ list-price premiums rose an average of 26% for 2026, with increases exceeding 30% in states like Delaware, New Mexico, and Mississippi. For subsidized enrollees, the picture is even worse: with the enhanced tax credits gone, what households actually pay has in many cases more than doubled. The CBO projects marketplace enrollment will drop from 22.8 million to 18.9 million, with 4.2 million Americans losing coverage entirely because it has become unaffordable. Aetna has already exited the individual market, and three insurers have pulled out of Illinois, where rates jumped nearly 29%.

Each of these moves accelerates the adverse selection spiral the ACA was designed to prevent. Fewer healthy enrollees means higher average costs, which means higher premiums, which drives out more healthy enrollees. The pool gets sicker and more expensive. Insurers exit markets where the math no longer works. The people left holding coverage are the ones who cannot afford to go without it, paying more for less, in a market with fewer options. This is what adverse selection looks like when policy actively feeds it rather than fighting it.
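
The spiral can be sketched in a few lines. This is a deliberately crude illustration, not a model of any actual marketplace: the ten expected-cost figures, the 20% “risk premium” people are assumed willing to pay above their own expected costs, and the flat per-person subsidy are all hypothetical, and the function name `spiral` is mine. The insurer charges one community-rated premium equal to the average cost of whoever is enrolled, and people leave when the premium, net of subsidy, exceeds what coverage is worth to them.

```python
def spiral(costs, risk_premium=0.2, subsidy=0.0, max_rounds=100):
    """Return (premium, enrollee count) once the pool stops changing.

    costs: expected annual claims for each potential enrollee.
    Each person stays only if cost * (1 + risk_premium) + subsidy >= premium.
    """
    enrolled = list(costs)
    premium = 0.0
    for _ in range(max_rounds):
        if not enrolled:
            break
        premium = sum(enrolled) / len(enrolled)  # break-even community rate
        stayers = [c for c in enrolled
                   if c * (1 + risk_premium) + subsidy >= premium]
        if stayers == enrolled:  # nobody else wants to leave
            break
        enrolled = stayers
    return premium, len(enrolled)


# Hypothetical pool: ten people with expected costs of $1,000 to $10,000.
costs = [1000 * i for i in range(1, 11)]
bare_premium, bare_n = spiral(costs)
stable_premium, stable_n = spiral(costs, subsidy=4500)
print("no subsidy:  ", bare_premium, bare_n)
print("with subsidy:", stable_premium, stable_n)
```

With these made-up numbers, the unsubsidized pool sheds its healthiest members round after round until only the three costliest people remain, paying a far higher premium. A subsidy large enough to keep the healthiest person enrolled holds all ten in place at the original rate. A mandate penalty works on the same margin from the other side, by making exit more expensive rather than staying cheaper.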

Consider Bernie Sanders’ original Medicare for All Act. It went a long way toward eliminating selection effects by creating a universal federal entitlement with comprehensive benefits. But even this ambitious proposal preserved a significant state-administered role for long-term care, routing it through existing Medicaid channels with maintenance-of-effort requirements tied to state spending floors. This raises a familiar adverse selection worry. Long-term care is among the most expensive forms of health provision, and the populations who need it most, the elderly, the disabled, the chronically ill, are precisely those whose costs any system has the strongest incentive to contain. By leaving the administration of those costs in fiscally pressured state channels rather than absorbing them fully into the federal pool, even a nominally universal plan can create a two-track system: comprehensive federal coverage for the majority, and more constrained, state-variable care for those with the greatest needs. The risk-averse healthy people, the ones whose overinsurance stabilizes any pool they join, stay in the well-resourced federal track. The people who need the most help end up in the track most vulnerable to cost-cutting. Partial universalism can be worse than honest market segmentation, because it borrows the moral authority of universalism while quietly reproducing the sorting it claims to abolish.

Race and Real Estate

Adverse selection is part of a broader family of sorting dynamics that appear wherever people make choices under uncertainty using imperfect information. Akerlof recognized this himself: his original paper treats racial discrimination in labor markets as a case of the same phenomenon, where employers use race as a proxy for unobservable worker quality, and the resulting discrimination makes it harder for members of the discriminated group to invest in quality, confirming the original prejudice. The mechanism is simple. The outcomes are vicious.

Racialized housing markets in the US offer a vivid example of how these dynamics interact with, and amplify, deep structural racism. No economic model captures the full weight of what redlining and blockbusting did to Black communities, and there is a risk that naming these dynamics as “selection effects” makes them sound more orderly and less violent than they were. But the analytical lens is worth using precisely because it shows how racist outcomes reproduce themselves even when individual malice is not the proximate cause.

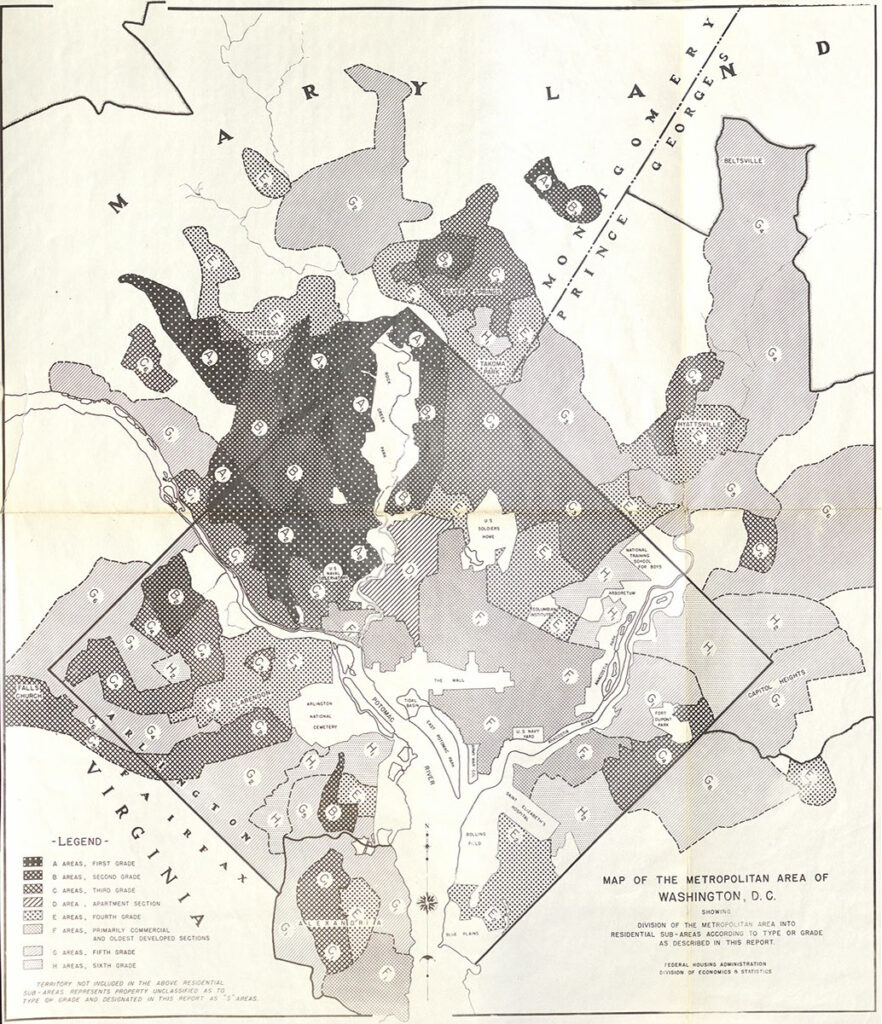

Consider how redlining and blockbusting worked hand-in-hand to prevent market-driven racial integration. (No story about race in America ever truly “begins” where we say it does; the history of slavery, Jim Crow, and white supremacy is ongoing and always in the background.)

It began when the Federal Home Loan Bank Board tried to determine whether some neighborhoods were too risky to finance. In the midst of the Great Depression, the most vulnerable neighborhoods were those primarily inhabited by African Americans: the people hit hardest by the Depression were the people who had already been worst off, so foreclosures were consistently worst in those neighborhoods. The maps drawn by the FHLBB were used by the Federal Housing Administration to dictate underwriting to private mortgage lenders. African Americans were barred from receiving federally underwritten loans. Private mortgage companies could still lend to them, but at much higher risk without federal insurance, and so they demanded a much higher premium.

A free-market critic would note, correctly, that the worst distortion here was government-created: the FHA drew the maps, and federal policy enforced the discrimination. This is true, and it matters. But the objection proves too much. Government created the information asymmetry; the market then amplified it through blockbusting, white flight, and self-reinforcing price spirals. Removing the FHA maps did not undo decades of wealth destruction. The damage compounds, and the compounding is a market process. The lesson is not that government intervention is always the answer, but that markets do not self-correct when the underlying information environment has been broken, even long after the original distortion is repealed.

Add to this the existence of “blockbusting” real estate agents, who used the threat of incoming African Americans to pressure white homeowners into selling. The fears were partly racial anxiety, but partly financial calculation: a “busted” block would lose considerable home value as wealthier whites fled and were replaced by African American buyers who had been shut out of better-financed markets. Even setting aside the racial animus, the financial incentives alone were sufficient to drive the sorting: any homeowner, regardless of personal attitudes, faced a real threat to her single largest asset. Real estate companies made a solid business of this practice, flipping houses from fleeing whites to middle-class African Americans for decades in places like Chicago.

While blockbusting sometimes looks like the just deserts of a racist society, where white people are so afraid of Black people that they willingly sell their homes at a loss, it exacerbated the underlying dynamics of segregation. The sorting was self-reinforcing: each departure confirmed the fears that motivated the next one, driving prices further down and concentrating poverty in the newly “turned” neighborhoods. The result was decades of wealth destruction in Black communities, even as the real estate agents who facilitated the churn profited handsomely.

Today we see related sorting dynamics working in the opposite direction: white homeowners return to the cities their parents fled, and expectations of neighborhood “improvement” drive transactions that accelerate displacement. The mechanism rhymes with blockbusting (speculation on racial neighborhood change) but the power dynamics are inverted. Capital flows in rather than out, and the displaced population, largely Black and largely renters, loses not just housing but neighborhoods, churches, schools, and the social networks that make a community function. They lack the market power that white homeowners had when they chose to flee. Some people may catch a windfall if they own property at the right moment of transition, but the overall pattern is the same: sorting by race and wealth, driven by information asymmetries and self-fulfilling expectations, producing outcomes that are worse for the most vulnerable.

There is a bitter irony here. Racial integration was the goal of decades of civil rights struggle. Now a version of it is arriving through market forces, and it is experienced, correctly, as displacement rather than progress. Integration through capital is still sorting. It replaces one racially stratified equilibrium with another, and the communities that fought hardest for integration bear the costs of its market-driven arrival. This is perhaps the clearest illustration of why adverse selection problems cannot be solved by markets alone: even when the market moves in the “right” direction, it moves on terms that reproduce the underlying injustice.

Test Scores and Schools

The connection between test scores and school segregation may be the clearest example of adverse selection logic outside insurance markets. Risk-averse parents cannot distinguish between two causes of low test scores: poverty and pedagogy. They tend to prefer schools whose students test well, treating scores as a proxy for teaching quality. Schools with lower test scores lose these risk-averse parents, who transfer their children elsewhere or simply move.

This has come to define real estate markets, with housing prices in desirable school districts dwarfing those in neighborhoods whose schools have historically underperformed on standardized tests. High-performing schools gradually accrue higher-income parents, while low-performing schools gradually accrue impoverished ones. Even childless homeowners prefer to live in high-performing districts, because of the relationship between school test scores and property values.

The sorting here is self-reinforcing in exactly the way Akerlof’s model predicts. The “good cars” leave the market, which makes the market look worse, which drives out more good cars. Parents who can afford to leave do; their departure further depresses scores; the next tranche of parents leaves. The schools that remain become, in effect, pools of adverse selection.

There are “community rating” equivalents: mixed catchment areas that require students of different races and incomes to attend the same schools. But the responses must be calibrated to account for consumer behavior. Parents can read catchment maps as well as any bureaucrat, and they will relocate for their children, moving to the suburbs or, in the most extreme cases, switching to private schools. Afterward they tend to oppose funding for the schools they have abandoned, creating a new cycle of sorting and disinvestment.

School choice advocates would argue that vouchers and charters are the solution: let motivated families escape failing schools. They are right that geographic assignment is an imperfect tool, and that trapping students in schools that do not serve them is its own injustice. But choice programs do not eliminate the sorting; they accelerate it with public funding. The families who exercise choice are disproportionately those with the information, resources, and engagement to navigate the system, leaving behind a more concentrated pool of the most disadvantaged students in schools with declining enrollment and declining revenue. This is adverse selection with a permission slip. The question is not whether parents should have options, but whether the mechanism for providing them creates a spiral that makes the remaining schools worse for the children who have the fewest options of all.

Employment and Credentials

The adverse selection dynamic also shapes the labor market. The General Education Development (GED) test was designed to help those who did not finish high school in the US demonstrate mastery of equivalent material. High school diploma holders earn more than those without one, so if the value of high school is primarily learning, then exam-certified learning ought to perform just as well. Yet as Stephen Cameron and James Heckman have shown, it does not.

Part of the explanation is adverse selection. The GED has become correlated with incarceration, and criminal backgrounds are a cause for hiring discrimination and low wages. Once a credential is associated with a negatively selected population, its market value degrades, which further discourages anyone with better options from pursuing it. The pool gets worse; the credential’s value drops further; the cycle continues.

But most GED holders did not leave high school because of incarceration. They tend to be primary caregivers, to have lost a loved one, or to have been abused. These experiences also make sustained full-time employment difficult. The credential ends up carrying the weight of every reason someone might not finish high school, and employers treat it accordingly.

In 2014, Pearson VUE tried to fix this by making the GED significantly harder: computer-based, aligned to Common Core standards, with constructed-response questions replacing multiple choice. The logic was straightforward. If the credential’s value had degraded because the pool of holders was negatively selected, a harder test should produce a more valuable credential. The results were dramatic. Test-takers dropped by more than half nationally; completion rates fell 23 to 39 percentage points across every racial and ethnic group. By 2016, Pearson had to lower the passing score from 150 to 145, retroactively awarding credentials to people who had scored in between. The stated reason was revealing: students hitting 150 were actually outperforming high school graduates in college, suggesting the bar had overshot. Pass rates eventually recovered to around 80%, and Pearson’s own research claims 45% of passers enrolled in postsecondary programs within three years. But “fewer people take the test, and the ones who do are better prepared” is a description of adverse selection doing its work, with the credential’s gatekeepers actively assisting. The people deterred by a harder test are precisely the most marginal candidates, the population the GED exists to serve.

A related sorting dynamic appears in “ban the box” policies, which prohibit employers from asking about criminal history on initial applications. There is evidence that employers who cannot directly inquire into criminal backgrounds compensate by discriminating against all African American men. The structural logic is clear enough: employers substitute a racial proxy for the information they’ve been denied. But the structural logic does not exhaust the moral reality. This is racism, leveraging the machinery of adverse selection to do its work. The policy designed to help the formerly incarcerated ends up punishing an entire demographic, and the employers making these decisions are not innocent bystanders caught in a sorting trap. They are choosing the proxy. The sorting logic is relentless, yes, but the people operating it bear responsibility for the choices they make within it.

Solutions, or: Why Universalism Matters

So far as I can tell, adverse selection and its related sorting dynamics are natural byproducts of free choice under asymmetric information. This means the solutions must address one or both of those conditions. But the menu of responses is richer than it first appears.

Correct the information asymmetry. Force disclosures that help the less-informed party make better decisions. This is what insurance companies do when they demand medical histories, and what consumer protection laws do when they require used-car inspections or home disclosures. But disclosure is a double-edged tool: the same information that helps markets function can also enable discrimination.

Limit the sorting. Mandates, community rating, anti-discrimination rules, and mixed catchment areas all work by preventing people from separating into stratified pools. The individual mandate in the ACA is a pure example: you must participate whether you’re healthy or sick, which stabilizes the pool.

Provide the good directly. Where selection effects are severe enough, the most effective response may be to remove the market mechanism altogether. Public schools, single-payer health systems, and universal social insurance don’t just limit choice; they eliminate the market in which adverse selection operates. This is the strongest argument for universalism: it doesn’t try to outsmart the sorting. It refuses to sort. A fair objection: universal systems do not eliminate sorting entirely. They push it into different channels. Single-payer systems produce wait times, regional quality variation, and private supplementary markets. The NHS has private alternatives; Canadians cross the border for some procedures. But the sorting that persists under universalism is a different kind than the sorting markets produce. Under market sorting, the excluded population is the sickest and poorest. Under universal systems, the people who opt into private alternatives are the wealthiest. A system where the rich buy faster care is a far less harmful equilibrium than one where the poor are priced out of care altogether.

These three are the standard responses. But the examples above suggest that they are not always sufficient, and that more creative interventions are possible.

Change the incentives, not the information. Rather than hiding risk information from insurers or forcing everyone into a single pool, you can let everyone see the information but make it unprofitable to act on it. Risk adjustment transfers do this: insurers who attract healthier pools pay into a fund that subsidizes insurers with sicker ones. The ACA has a version of this mechanism, though it remains underdeveloped. The principle generalizes. School funding formulas that pay more per high-need student, enough to make “difficult” students a revenue source rather than a cost center, would transform the incentive structure that drives school sorting. Instead of fighting adverse selection, you make it financially irrelevant.
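
A stripped-down version of a risk adjustment transfer shows why cherry-picking stops paying. This is a sketch of the general budget-neutral idea only, not the formula the ACA actually uses, which involves many more adjustment factors. The `Plan` class, the two plans, their risk scores, and the $6,000 average premium are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    name: str
    members: int
    avg_risk: float  # expected cost relative to a typical enrollee (1.0)


def transfers(plans, avg_premium):
    """Budget-neutral transfers: positive means the plan receives money.

    Each plan is paid (or charged) in proportion to how far its average
    risk sits above (or below) the market-wide average.
    """
    total_members = sum(p.members for p in plans)
    market_risk = sum(p.members * p.avg_risk for p in plans) / total_members
    return {
        p.name: (p.avg_risk - market_risk) / market_risk * avg_premium * p.members
        for p in plans
    }


# Hypothetical market: one plan has attracted the healthy, one the sick.
plans = [Plan("Healthy Pool", 1000, 0.8), Plan("Sick Pool", 1000, 1.2)]
result = transfers(plans, avg_premium=6000)
for name, amount in result.items():
    print(f"{name}: {amount:,.0f}")
```

The payments sum to zero across the market, so no outside money is required. The plan that attracted healthier members pays roughly what it saved by doing so, which is the sense in which the sorting becomes financially irrelevant.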

Change what information is legible. So far this essay has framed the choice as one between revealing information and hiding it. But there is a third option: change which information is visible. Raw test scores conflate poverty and pedagogy; value-added models that control for demographics would give parents a signal for teaching quality that doesn’t simply proxy for income. The GED carries a stigma because it is a single binary credential that cannot distinguish a formerly incarcerated person from a teenage caregiver. Richer signals, such as portfolio-based assessment, stackable micro-credentials, or apprenticeship records that carry narrative rather than just pass/fail, could break the pooling that makes the credential toxic. Adverse selection thrives on coarse information. Finer-grained information can disrupt the sorting without requiring either blanket disclosure or blanket concealment.

Design for precommitment. Adverse selection requires people to sort themselves after they know their type: sick or healthy, in a good school district or a bad one. If you can get people to commit before they know, the selection pressure disappears. Employer-provided health insurance partially works this way: you choose a job before you get sick, so the pool is not self-selected by health status. School assignment by lottery before test scores are published does the same thing. This is the Rawlsian insight as design principle: the veil of ignorance is not just a thought experiment but a template for institutions. Where you can build precommitment into the architecture, you defuse adverse selection at its root.

Recruit against the spiral. If adverse selection is driven by the exit of good actors, one response is to make staying, or entering, actively attractive to them. This reframes the ACA outreach cuts as even more destructive than the premium numbers alone suggest: outreach was not just informational but counter-selective, specifically targeting the healthy young people whose participation stabilizes the pool. Magnet programs in struggling schools follow the same logic. So do signing bonuses for teachers in high-need districts. And so does second-chance hiring: employers who actively recruit people with criminal backgrounds, GED credentials, or nontraditional career paths are not just doing social good. They are breaking the adverse selection cycle that degrades those credentials and populations in the first place. Every employer who hires a GED holder and has a good experience makes the next employer’s decision slightly easier. Counter-selection is contagious in the same way that adverse selection is; it just requires someone to go first.

Route around the degraded pool. When a credential or institution has been too badly damaged by adverse selection to rehabilitate, sometimes the answer is to build a new pathway rather than trying to rescue the old one. This is arguably what community colleges do for GED holders, what expungement does for criminal records, and what “housing first” does for homelessness services. You are not correcting the information asymmetry or forcing people to stay in a broken market. You are routing around it entirely. The risk is that the new pathway eventually suffers the same selection effects (community colleges themselves are not immune to this). But pathway replacement buys time and creates options that pure reform cannot.

Each of these approaches has costs, and none eliminates the underlying pressures entirely. Parents still move to better school districts; wealthy patients still seek private care; employers still find proxies. But the history traced above suggests that leaving markets to sort themselves, especially in domains as fundamental as health care, housing, education, and employment, reliably produces equilibria that are worse for almost everyone, and catastrophic for the most vulnerable. And the range of available interventions is wider than the usual debate between “let the market work” and “replace the market” would suggest. The most promising designs often work with the sorting dynamics rather than against them, redirecting incentives, enriching information, and recruiting the actors whose participation stabilizes the whole system.

The point of naming the pattern is to make it harder to ignore. Once you see adverse selection, you start to notice it everywhere: in the way dating apps stratify their users, in the way adjunct hiring degrades faculty quality, in the way nonprofit funding cycles punish organizations that serve the hardest cases. The concept doesn’t explain everything. But it explains a remarkable amount about why free markets, left to their own devices, so often deliver the opposite of what their advocates promise.